There’s a voice inside most people’s minds that comes alive when they listen, read, or prepare to speak. This “internal monologue” is thought to support complex cognitive processes such as working memory, logical reasoning, and motivation.1 In fact, inner speech persists in many individuals who are unable to speak owing to injury or disease.2 More than half a century ago, Jacques Vidal, a computer scientist at the University of California, Los Angeles, proposed the idea of brain-computer interfaces (BCIs): systems that could use electrical signals in the brain to control prosthetic devices.3

Since then, scientists have developed BCIs that enable people with quadriplegia to control a computer cursor or a robotic arm, and even to move their own limbs. Recently, a person with amyotrophic lateral sclerosis (ALS, a neurodegenerative disease) who had severe difficulty speaking was able to hold a freeform conversation with the help of a speech BCI.4 The neuroprosthesis accurately translated brain activity into coherent sentences while the person tried to speak as best they could. However, relying on attempted speech can fatigue the user and limit communication speed.

To address this limitation, Francis Willett, a neuroscientist at Stanford University, and his team developed a speech BCI that could decode imagined sentences.5 In their study, published in Cell, the system enabled people with speech paralysis to communicate in real time simply by thinking about what they wanted to say. “If you just have to think about speech instead of actually trying to speak, it’s potentially easier and faster for people,” said Benyamin Meschede-Krasa, a graduate student at Stanford University and coauthor of the study, in a statement.

The researchers recruited four participants enrolled in the BrainGate2 clinical trial. All had speech impairments of varying severity caused by ALS or stroke, and all had implanted devices that recorded neuronal signals from their motor cortex. The researchers asked the participants either to imagine speaking a set of English words or to try to vocalize them. Both behaviors activated the same brain regions, but the neuronal responses to inner speech were weaker than those produced by attempted speech. The team then trained a machine learning model on the neuronal signals associated with a library of imagined words to create the speech BCI. To test its performance, the researchers asked the participants to imagine various cued sentences drawn from a 125,000-word vocabulary, and found that the neuroprosthesis could decode inner speech with up to 74 percent accuracy.

“This is the first time we’ve managed to understand what brain activity looks like when you just think about speaking,” said Erin Kunz, an electrical engineer at Stanford University and lead author of the study. “For people with severe speech and motor impairments, BCIs capable of decoding inner speech could help them communicate much more easily and more naturally.”

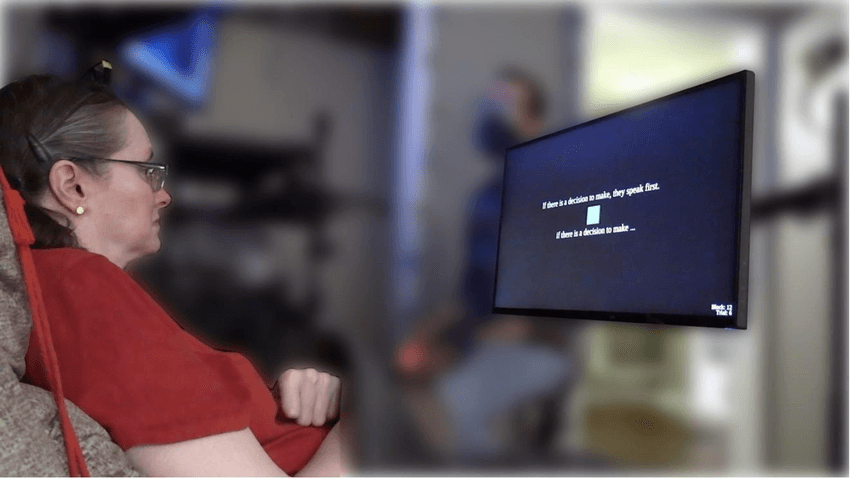

A patient with speech paralysis imagines a cued sentence (above) in her mind, which is decoded by a brain-computer interface in real time (below).

Emory BrainGate Team

But the real test was whether the BCI could decode uninstructed, freeform verbal thoughts. To find out, Willett and his team showed two participants a stair-shaped pattern that they then had to reproduce using a joystick. The participants were asked to remember the shape either as a sequence of directions rehearsed in inner speech (up, left, up) or by relying solely on visual memory. The speech BCI could decode the verbal strategy but not the visual one. In another task, in which the participants counted how many times a shape of a certain color appeared in a grid, the BCI decoded their silent counting, albeit with substantial errors.

Although the speech BCI offers substantial benefits to users, it also raises the possibility of unintentionally decoding private verbal thoughts. To guard against this, the team trained the system to recognize an imagined password, “chittychittybangbang,” which it detected with 98 percent accuracy. Users could unlock the system simply by silently thinking the phrase, followed by what they wished to say.

Willett and his team acknowledged that the current system is not yet able to accurately decode spontaneous thinking, though improvements in recording technology could eventually make this a reality.

“The future of BCIs is bright,” Willett said. “This work gives real hope that speech BCIs can one day restore communication that is as fluent, natural, and comfortable as conversational speech.”

- Alderson-Day B, Fernyhough C. Inner speech: Development, cognitive functions, phenomenology, and neurobiology. Psychol Bull. 2015;141(5):931-965.

- Hurlburt RT, et al. Toward a phenomenology of inner speaking. Conscious Cogn. 2013;22(4):1477-1494.

- Vidal JJ. Toward direct brain-computer communication. Annu Rev Biophys Bioeng. 1973;2:157-180.

- Card NS, et al. An accurate and rapidly calibrating speech neuroprosthesis. N Engl J Med. 2024;391(7):609-618.

- Kunz EM, et al. Inner speech in motor cortex and implications for speech neuroprostheses. Cell. 2025.